Scalability engine guidelines for SolarWinds products

See this video: Enterprise-Class Scalability for the Orion Platform.

Your SolarWinds Platform installation consists of a main polling server (SolarWinds Platform Web Console and the main polling engine), and the SolarWinds Platform database server. The polling engine gathers device statistics and stores the information on the SolarWinds Platform database server. The main polling server reads the stored information from the SolarWinds Platform database server.

Your main polling engine can poll only a limited number of elements, and that number depends on the SolarWinds Platform product. Too many elements on a single polling engine can have a negative impact on your SolarWinds Platform server. When a polling engine reaches its maximum polling throughput, polling intervals are automatically increased to handle the higher load. To keep the default polling intervals, you need to add polling capacity.

What is a scalability engine?

"Scalability engine" is a general term that refers to any server that extends the monitoring capacity of your SolarWinds installation, such as Additional polling engines (APEs), Additional web servers (AWS), or High Availability (HA) backups.

How do I know that I need to scale my SolarWinds Platform product?

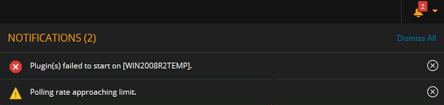

When the polling capacity on a polling engine is getting close to the limit or is exceeded, SolarWinds Platform products notify you about it.

- See Notifications in the SolarWinds Platform Web Console.

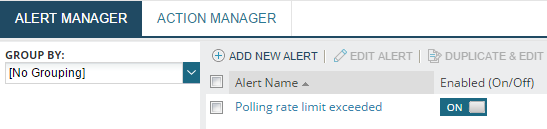

- Review your alerts. If the Polling rate limit exceeded out-of-the-box alert is enabled, the alert sends an email and adds an entry to All Active Alerts.

Select the scalability option suitable for your environment and deployed SolarWinds Platform products

Review the scalability options and compare them with options available for SolarWinds Platform products you have deployed.

| Scalability option | Supported products | When to select this option |
|---|---|---|
| Additional polling engine (APE) | All SolarWinds Platform products except ETS and EOC | To increase the polling capacity of your deployment, deploy APEs. |
| Additional polling engine (APE) for selected products | | Additional polling engines are updated to a licensing-controlled version. For selected products, the Additional polling engine license is included in the product license. Install an Additional polling engine. During the evaluation period, you can see an APE evaluation license in the License Manager. After the evaluation period, no extra license is displayed in the License Manager because it is included in your product license. You cannot stack these licenses. |
| Additional web server | All SolarWinds Platform products | To improve the performance of your SolarWinds Platform Web Console by load-balancing a large number of users, or in secured environments where your SolarWinds Platform products are behind a firewall, deploy Additional web servers. » Learn more |
| Stacking licenses | | If you have enough resources on your polling engine server, apply multiple Additional polling engine licenses on the server to increase its polling capacity. » Learn more |
| SolarWinds Platform Collector (Collector) | | To securely monitor offices over low-bandwidth and high-latency connections, or small offices in remote locations, deploy a SolarWinds Platform Collector. » Learn more |
| Remote Office Poller for Additional polling engines (ROP) | | Remote Office Pollers have reached End of Sale. Customers under maintenance are still supported. To deploy your SolarWinds Platform product in numerous remote locations without scaling up your installation, deploy a Remote Office Poller for Additional polling engine (ROP, mini-poller). » Learn more |
| High Availability | All SolarWinds Platform products except ETS | To implement failover protection for your SolarWinds Platform server and Additional polling engines, deploy High Availability. |

Deploy the selected scalability option

Review scalability requirements and find out more about scalability options.

Recommendations for large deployments with 10 or more Additional polling engines

- Reassign nodes from the main polling engine to Additional polling engines (APEs) so that the main polling engine can focus on core functions, such as running the SolarWinds Platform Web Console, alerting, reporting, APE management, and more.

- Reconfigure devices to send flow, syslog, and SNMP trap information to your APEs for further offloading.

Centralized deployment with Additional polling engines

In a centralized deployment with APEs, data is polled locally in each region and stored centrally on the database server in the primary region. All licenses are shared in a centralized deployment. Use this deployment if your organization requires centralized IT management and localized collection of monitoring data.

Why deploy APEs centrally?

Users can view all network data from the SolarWinds Platform Web Console in the Primary Region where the main SolarWinds Platform server is installed.

Users can log in to a local SolarWinds Platform Web Console if an Additional polling engine is installed in a secondary region.

With Centralized Deployment, you can:

- Add, delete, and modify nodes, users, alerts, and reports centrally on the main SolarWinds Platform server.

- Scale all installed SolarWinds Platform products. Scaling one SolarWinds Platform product increases the capacity of the other SolarWinds Platform products. For example, installing an APE for NPM also increases the polling capacity for SAM.

- Specify the polling engine that collects data for monitored nodes and reassign nodes between polling engines.

All Key Performance Indicators (KPIs), such as Node Response Times, are calculated from the perspective of the polling engine. For example, the response time for a monitored node in Region 2 is equal to the round trip time from the APE in Region 2 to that node.

For additional information on Centralized Deployment, see the SolarWinds Platform Scalability Tech Tip.

Requirements for APEs

The latency (RTT) between each SolarWinds Platform Additional polling engine and the database server should be below 200 ms. Degradation may begin around 100 ms, depending on your utilization and the size of your deployment. In general, higher latency will impact larger deployments more than smaller deployments.

Ping the SolarWinds Platform SQL Server to find the current latency and ensure a reliable static connection between the server and the regions.
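The latency guidance above can be turned into a quick check run from a candidate APE. This is a minimal sketch, not a SolarWinds tool: it assumes that the time to complete a TCP handshake with the SQL Server port (1433 by default) is a reasonable stand-in for the RTT that ping reports, and it uses the 100 ms and 200 ms thresholds from this section. The function names are hypothetical.

```python
import socket
import time

# Thresholds from the APE requirements above (round-trip time, milliseconds).
DEGRADATION_MS = 100.0  # degradation may begin around this value
MAXIMUM_MS = 200.0      # RTT should stay below this value


def classify_rtt(rtt_ms: float) -> str:
    """Map a measured APE-to-database RTT to a rough verdict."""
    if rtt_ms >= MAXIMUM_MS:
        return "exceeds recommended maximum"
    if rtt_ms >= DEGRADATION_MS:
        return "may degrade"
    return "ok"


def tcp_connect_rtt_ms(host: str, port: int = 1433, timeout: float = 5.0) -> float:
    """Approximate RTT as the time needed to open a TCP connection.

    Assumption: a TCP connect costs roughly one round trip, so this only
    approximates what ping reports; it does not replace it.
    """
    start = time.perf_counter()
    with socket.create_connection((host, port), timeout=timeout):
        pass  # connection established; close it immediately
    return (time.perf_counter() - start) * 1000.0
```

For example, `classify_rtt(max(tcp_connect_rtt_ms("sql01.example.local") for _ in range(5)))` evaluates the worst of five samples, where `sql01.example.local` is a placeholder for your SolarWinds Platform database server. Using the worst sample rather than the average is a deliberately conservative choice.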

- Make sure that your environment meets the requirements for Additional polling engines in Multi-module system guidelines. Extra large environment requirements include:

  - Amazon Web Services: m5.xlarge
  - Microsoft Azure: D4s_v3
  - On-premises:
    - Quad-core processor or better
    - 32 GB RAM
    - Storage: 150 GB, 15,000 RPM
    - Windows Server 2022, 2019, or 2016, Standard or Datacenter Edition

- Make sure that you have opened all necessary ports:

  Additional polling engines (APEs) have the same port requirements as the main polling engine. The following ports are the minimum required for an Additional polling engine to ensure the most basic functions.

  | Port | Protocol | Service/Process | Direction | Description |
  |---|---|---|---|---|
  | 161 | UDP | SolarWinds Job Engine | Outbound | The port used by the Additional polling engine (APE) to query devices for SNMP information. |
  | 162 | UDP | SolarWinds Trap Service | Inbound | The port used by the APE for receiving trap messages from devices. |
  | 1433 | TCP | SolarWinds Collector Service | Outbound | The port used for communication between the APE and the SolarWinds Platform database. |
  | 1434 | UDP | SQL Browse Service | Outbound | The port used for communication between the APE and the SQL Server Browser Service (SolarWinds Platform database) to determine how to communicate with certain non-standard SQL Server installations. Required only if your SQL Server is configured to use dynamic ports. |
  | 5671 | TCP | RabbitMQ | Outbound | The port used for SSL-encrypted RabbitMQ messaging from the Additional polling engine to the main polling engine. |
  | 17732 | TCP | SolarWinds Certificate Management Service | Internal only | The local port used for secure communication between the certificate management clients and the certificate management service. |
  | 17733 | TCP | SolarWinds Job Engine v3 | Internal only | The local port used by the SolarWinds Job Engine v3 for communication with the collector. |
  | 17777 | TCP | SolarWinds Information Service | Bidirectional | The port used for communication between the Additional polling engine and the main polling engine. |

- Use the SolarWinds Installer to deploy the Additional polling engine.

How to deploy?

- Make sure that your environment meets the requirements for APEs.

- Use the SolarWinds Installer to deploy the Additional polling engine.

Distributed deployment with main and Additional polling engines in regions

In a distributed deployment, each region is licensed independently, and data is polled and stored locally in each region. Scale each region independently by adding APEs. You can access monitoring data from each region in a central location with the Enterprise Operations Console (EOC).

SolarWinds Enterprise Operations Console must be installed and licensed if you want to view aggregated data from multiple SolarWinds Platform servers in a distributed deployment.

Why deploy Additional polling engines in a distributed environment?

With distributed deployment you can:

- Use local administration to manage, administer, and upgrade each region independently.

- Create, modify, or delete nodes, users, alerts, and reports separately in each region.

- Export and import objects, such as alert definitions, Universal Device Pollers, and SAM application monitor templates between instances.

- Mix and match modules and license sizes as needed. For example:

  - Region 1 has deployed NPM SL500, NTA for NPM SL500, UDT 2500, and 3 APEs.
  - Region 2 has deployed NPM SLX, SAM500, UDT 50,000, and 3 APEs.
  - Region 3 has deployed only NPM SL100 and 3 APEs.

EOC 2.0 and later use a function called SWIS Federation to query for specific data only when needed. This method allows EOC to display live, on-demand data from all monitored SolarWinds Sites without storing historical data.

How to deploy?

- Make sure that your environment meets the requirements for APEs.

- Use the SolarWinds Installer to deploy the Additional polling engine.

Additional web servers

Deploying an Additional web server might be helpful in the following cases:

- The number of users logged in to the SolarWinds Platform Web Console at the same time is close to 50.

- The SolarWinds Platform Web Console is having performance issues.

The SolarWinds Platform Web Console performance depends on the performance of the computer where you open the browser. See Browser requirements in the latest system requirements.

How to deploy?

- Make sure that all ports required for Additional web servers are open:

  | Port | Protocol | Service/Process | Direction | Description |
  |---|---|---|---|---|
  | 80 | TCP | World Wide Web Publishing Service | Inbound | Default Additional web server port. Open the port to enable communication from your computers to the SolarWinds Platform Web Console. If you specify any port other than 80, you must include that port in the URL used to access the SolarWinds Platform Web Console. For example, if you specify an IP address of 192.168.0.3 and port 8080, the URL used to access the web console is http://192.168.0.3:8080. |
  | 443 | TCP | IIS | Inbound | The default port for HTTPS binding. |
  | 1433 | TCP | SolarWinds Information Service | Outbound | The port used for communication between the SolarWinds Platform server and the SQL Server. Open the port from your SolarWinds Platform Web Console to the SQL Server. |
  | 5671 | TCP | RabbitMQ | Outbound | The port used for SSL-encrypted RabbitMQ messaging from the Additional web server to the main polling engine. |
  | 17732 | TCP | SolarWinds Certificate Management Service | Internal only | The local port used for secure communication between the certificate management clients and the certificate management service. |
  | 17777 | TCP | SolarWinds Information Service | Outbound | SolarWinds Platform module traffic. Open the port to enable communication from all polling engines (both main and additional) to the Additional web server, and from the Additional web server to polling engines. |

- Use the SolarWinds Installer to deploy the Additional web server. See Installing Additional polling engines.

Learn more: Optimize the performance of SolarWinds Platform Web Console.

Remote Office Pollers

To deploy your SolarWinds Platform product in numerous remote locations when you do not need to scale up your installation, use a Remote Office Poller for Additional polling engine (ROP, mini-poller).

Select a Remote Office Poller by the number of elements you need to poll:

- ROP250 polls up to 250 elements.

- ROP1000 polls up to 1000 elements.

How to deploy?

- Make sure that all deployed products support this option.

- Make sure that your environment meets the requirements for APEs.

- Use the SolarWinds Installer to deploy Remote Office Pollers. Follow the steps for installing Additional polling engines.

SolarWinds Platform Collectors

SolarWinds Platform Collector (Collector) is a lightweight distributed polling engine that you can use to monitor devices in your environment agentlessly through WMI and SNMP.

Why deploy?

- Collectors use the Agent technology to communicate with the SolarWinds Platform

- Collectors do not need a direct connection to the database

- Collectors are easy to deploy in remote locations, thanks to their simplified architecture

- Collectors can poll/cache over unreliable networks (store up to 24 hours with no connection to the polling engine)

Requirements

| Requirement | Description |
|---|---|
| Supported operating systems | |
| Ports to open | Open only one port. The Collector agent is NAT friendly and supports authenticated proxy traversal, so you can easily deploy the Remote Collector in your DMZ, in branch office locations, and even in the cloud, with very few or no firewall policy changes. |
| SolarWinds Platform products that support SolarWinds Platform Collector | |
| Other requirements | |

What is supported?

SolarWinds Platform Collector (Collector) does not provide full support for all metrics polled by Additional polling engines. For more details, see SolarWinds Platform Collector support.

| Product supporting Collector | Supported features |
|---|---|
| NAM 2020.2.1 (IPAM, NCM, NPM, NTA, UDT, VNQM) | Common SolarWinds Platform metrics; NPM |
| SAM (node-based licenses only) | |

Collector Scalability limits

- Maximum of 100 Collectors per polling engine

- Maximum of 1,000 elements per Collector

- Maximum of 48,000 elements per polling engine across all SolarWinds Platform Collectors
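The three Collector limits interact: 100 Collectors at 1,000 elements each would be 100,000 elements, so the 48,000-element total per polling engine binds well before the Collector count does. A minimal planning check in Python, assuming you know the element count planned for each Collector on one polling engine; `collector_plan_problems` is a hypothetical helper.

```python
from typing import List, Sequence

# Limits from "Collector Scalability limits" above.
MAX_COLLECTORS_PER_ENGINE = 100
MAX_ELEMENTS_PER_COLLECTOR = 1_000
MAX_COLLECTOR_ELEMENTS_PER_ENGINE = 48_000


def collector_plan_problems(elements_per_collector: Sequence[int]) -> List[str]:
    """Return limit violations for the Collectors on one polling engine.

    An empty list means the plan fits within the documented limits.
    """
    problems = []
    if len(elements_per_collector) > MAX_COLLECTORS_PER_ENGINE:
        problems.append(
            f"{len(elements_per_collector)} Collectors exceed the limit of "
            f"{MAX_COLLECTORS_PER_ENGINE} per polling engine"
        )
    for index, count in enumerate(elements_per_collector):
        if count > MAX_ELEMENTS_PER_COLLECTOR:
            problems.append(
                f"Collector {index} polls {count} elements "
                f"(max {MAX_ELEMENTS_PER_COLLECTOR})"
            )
    total = sum(elements_per_collector)
    if total > MAX_COLLECTOR_ELEMENTS_PER_ENGINE:
        problems.append(
            f"{total} elements exceed the per-engine total of "
            f"{MAX_COLLECTOR_ELEMENTS_PER_ENGINE}"
        )
    return problems
```

For example, 48 fully loaded Collectors pass, but a 49th one at 1,000 elements pushes the polling engine over the 48,000-element total.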

Upgrade/migration details

- Upgrades occur over the same single port that the SolarWinds Platform Collector uses to communicate with the SolarWinds Platform server

- Plugins are deployed automatically to SolarWinds Platform Collector as new SolarWinds Platform products are installed

- When you upgrade the main SolarWinds Platform server, Collectors are upgraded automatically

- You can move nodes between SolarWinds Platform Collector and/or APEs

How to deploy?

- Deploy a SolarWinds Platform Agent that uses agent-initiated communication, for example with the Add Node wizard or manually.

  - Agents deployed on Additional polling engines or on the main SolarWinds Platform server cannot be used as SolarWinds Platform Collectors.
  - Converting an Agent installed on the main polling engine or on an Additional polling engine is not supported.
  - Collectors cannot poll nodes that are polled via an Agent.
  - Collectors require that the Agent uses agent-initiated communication.

- Promote the node to a Remote Collector:

  - In the SolarWinds Platform Web Console, click Settings > All Settings > Manage Agents.
  - Select the node hosting the future Collector and click More Actions > Promote Agent to Remote Collector.

    Promoting an agent to a Collector deploys new agent plugins on the node that enable the agent to poll other devices.

    To see a list of Collectors deployed on your polling engine, click Settings > All Settings > Manage Remote Collectors, or click the Remote Collectors tab on the Manage Agents page.

- Specify the nodes you want to poll with the SolarWinds Platform Collector:

  - When discovering nodes on your network with the Network Sonar Wizard, select the SolarWinds Platform Collector in the Scan the network using drop-down.
  - When adding nodes for monitoring with the Add Node wizard, select the polling method, and then select the Remote Collector in the Polling Engine drop-down.
  - To use the Collector for polling nodes that are already monitored with SolarWinds Platform, go to the node details page, click Edit Node, and specify the Collector in the Polling Engine drop-down.

Uninstall/Demote Collectors to Agents

You cannot change a SolarWinds Platform Collector back to a SolarWinds Platform Agent. To use a different Agent as the Collector, uninstall the SolarWinds Platform Collector/Agent from the original server and deploy an Agent to the new target server.

- Navigate to the Manage Nodes page and remove or reassign all nodes assigned to the Collector.

- Navigate to the Settings > Polling Engines page and remove the Collector polling engine.

  The Delete unused polling engine button appears only when there are no nodes assigned to the Collector.

- Navigate to the Manage Agents page and delete the Collector agent.

Now you can promote another agent to a SolarWinds Platform Collector.

Stack licenses

If your polling engines have enough resources available, you can stack the Additional polling engine licenses for some SolarWinds Platform products, such as NPM. Stacking APE licenses enhances the polling capacity of your main polling engine or APE. A stack requires only one IP address, regardless of the number of APEs.

You can only stack APE licenses. Stacking product licenses is not supported. For example, you cannot stack two NPM SL 2000 licenses and make it an NPM SL 4000.

If the resources on your polling engine are already constrained and you cannot allocate additional resources, consider installing an APE.

How to deploy?

- Check that all deployed products support this option.

  In some deployment types (for example, SAM with node-based licensing), APE licenses are stacked automatically.

- Make sure that your environment meets the requirements for Additional polling engines in Multi-module system guidelines.

- Assign multiple licenses to a polling engine with the web-based License Manager. The maximum number of licenses you can apply to a single server depends on the SolarWinds Platform product.

  - In the SolarWinds Platform Web Console, click Settings > All Settings > License Manager.
  - Click Add/Upgrade License, enter the activation key and registration details, and click Activate.

    The activated license with activation details displays in the License Manager.

  - In the License Manager, select the license, and click Assign.
  - Select a polling engine, and click Assign.

    The license is stacked on the selected polling engine, and its polling capacity is extended.
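Stacked capacity is simple multiplication, capped by the product's stacking limit. The sketch below uses the NPM figures from the product guidelines later in this section (about 12,000 elements per polling engine, and up to four polling engines per server). `stacked_capacity` and `engines_to_stack` are hypothetical helpers; other products have different per-engine figures and stacking limits, so pass those in explicitly.

```python
import math

NPM_ELEMENTS_PER_ENGINE = 12_000  # approximate, at standard polling frequencies
NPM_MAX_ENGINES_PER_SERVER = 4    # main engine + 1-3 APEs, or 4 APEs


def stacked_capacity(engines: int,
                     per_engine: int = NPM_ELEMENTS_PER_ENGINE,
                     max_engines: int = NPM_MAX_ENGINES_PER_SERVER) -> int:
    """Approximate element capacity of one server with `engines` stacked polling engines."""
    if not 1 <= engines <= max_engines:
        raise ValueError(f"between 1 and {max_engines} engines can be stacked on one server")
    return engines * per_engine


def engines_to_stack(elements: int,
                     per_engine: int = NPM_ELEMENTS_PER_ENGINE,
                     max_engines: int = NPM_MAX_ENGINES_PER_SERVER) -> int:
    """Smallest stack on one server that covers `elements`."""
    needed = max(1, math.ceil(elements / per_engine))
    if needed > max_engines:
        # One server cannot hold this load; install an APE on another server.
        raise ValueError("one server is not enough; deploy an APE on another server")
    return needed
```

For example, 30,000 NPM elements need a stack of three engines, and a full stack of four tops out at 48,000 elements, which matches the NPM guideline.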

Scalability Engine Guidelines by Product

The following sections provide guidance for using scalability engines to expand the capacity of your SolarWinds installation.

In your SolarWinds Platform deployment, you can use WMI to poll a maximum of 2,100 nodes per polling engine.

SolarWinds Observability Self-Hosted

| SolarWinds Observability Self-Hosted Scalability Engine Guidelines | |
|---|---|
| Main polling engine limits | 48,000 elements at standard polling frequencies: 50–100 concurrent SolarWinds Platform Web Console users. To monitor more than ~1,000,000 elements, consider using SolarWinds Enterprise Operations Console. When you are not using or evaluating the Log Viewer, the following limits apply: When using the Log Viewer, the limit is 1,000 events per second (syslogs and SNMP traps combined). |
| Scalability options | Additional polling engines (APEs) are only available for the Enterprise Scale Edition. Each instance supports up to 100 APEs, with a maximum of 1,000,000 monitored elements per instance. With SolarWinds Observability Self-Hosted, flexible licensing allows you to distribute your license across several instances, and each instance can scale to these limits. There are no licensing restrictions on the number of APEs you can deploy. 2025.4 2025.1 - 2025.2.1 For larger environments, consider using WinRM. See Configure WinRM polling. 2024.4: These limits are valid for standard polling frequencies. |
| SolarWinds Platform Collector | Yes |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the primary SolarWinds Platform server and any APEs that are connected over a WAN. Most traffic related to monitoring is between an APE and the SolarWinds Platform database. |
| Other considerations | How much bandwidth does SolarWinds require for monitoring? See SolarWinds Platform server Hardware Requirements in the SolarWinds Platform documentation. |

Dameware in Centralized Mode

| Dameware Scalability Engine Guidelines | |
|---|---|
| Scalability options | 150 concurrent Internet Sessions per Internet Proxy; 5,000 Centralized users per Centralized Server; 10,000 Hosts in Centralized Global Host list; 5 MRC sessions per Console |

Database Performance Analyzer (DPA)

| DPA Scalability Engine Guidelines | |
|---|---|
| Scalability options | Fewer than 20 database instances monitored on a system with 1 CPU and 1 GB RAM; 21–50 database instances monitored on a system with 2 CPUs and 2 GB RAM; 51–100 database instances monitored on a system with 4 CPUs and 4 GB RAM; 101–250 database instances monitored on a system with 4 CPUs and 8 GB RAM; more than 250 database instances monitored through Central Server mode. See Link together separate DPA servers in the DPA Administrator Guide. |
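The DPA sizing tiers can be read as a lookup table. A minimal Python sketch; `dpa_sizing` is a hypothetical helper, and it assumes that exactly 20 instances belongs to the first tier, because the table says "fewer than 20" and then starts the next tier at 21.

```python
from typing import Optional, Tuple

# (upper bound of instances, CPUs, RAM in GB), from the DPA table above.
DPA_TIERS = [(20, 1, 1), (50, 2, 2), (100, 4, 4), (250, 4, 8)]


def dpa_sizing(instances: int) -> Optional[Tuple[int, int]]:
    """Return (cpus, ram_gb) for one DPA server, or None when Central Server mode applies.

    Assumption: exactly 20 instances is treated as the first tier.
    """
    if instances < 1:
        raise ValueError("instances must be at least 1")
    for upper, cpus, ram_gb in DPA_TIERS:
        if instances <= upper:
            return cpus, ram_gb
    return None  # more than 250: link separate DPA servers in Central Server mode
```

For example, `dpa_sizing(75)` falls in the 51–100 tier, and anything above 250 returns `None` to signal Central Server mode.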

Engineer's Toolset on the Web

| Engineer's Toolset on the Web Scalability Engine Guidelines | |
|---|---|
| Scalability options | 45 active tools per Engineer's Toolset on the Web instance; 3 tools per user session; 1 active tool per mobile session; 10 nodes monitored at the same time per tool; 48 interfaces monitored at the same time per tool; 12 metrics rendered at the same time per tool |

Enterprise Operations Console (EOC)

| EOC Scalability Engine Guidelines | |
|---|---|
| Scalability options | Starting with EOC 2.2, EOC has been successfully tested with 100 SolarWinds Sites with a total of 1 million elements (nodes, interfaces, volumes, and so on). Starting with EOC 2020.2.6, EOC supports up to 2 million elements. |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the EOC server and any remote SolarWinds Platform servers or APEs. |
| Connectivity | For redundancy, multiple EOC servers can be connected to the same SolarWinds Site. |
| Latency | SolarWinds recommends that latency between the EOC server and connected SolarWinds Sites be less than 100 ms (both ways). EOC can function at higher latencies, but performance might be affected. EOC was tested with up to 500 ms of latency and remained functional, but performance (specifically with reports) was affected. |

IP Address Manager (IPAM)

| IPAM Scalability Engine Guidelines | |
|---|---|
| Scalability options | 3 million IPs per SolarWinds IPAM main polling engine; an additional 1 million IPs per APE |
| Remote Office Poller | Yes |

Log Analyzer (LA)

| LA Scalability Engine Guidelines | |
|---|---|
| Scalability limits | One LA instance can handle up to: |

NetFlow Traffic Analyzer (NTA)

| NTA Scalability Engine Guidelines | |
|---|---|
| Remote Office Poller | Yes |
| Main polling engine limits | 50k FPS per polling engine. For more information, see Network Performance Monitor (NPM). |
| Scalability options | Up to 300k FPS; 50k FPS per polling engine. For more information, see Network Performance Monitor (NPM). |
| WAN and/or bandwidth considerations | 1.5% - 3% of total traffic seen by the exporter |
| Other considerations | See Flow environment best practices in the NTA Getting Started Guide. |
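The NTA figures support two quick estimates: how many polling engines a given flow rate needs, and roughly how much WAN bandwidth flow export adds. A minimal Python sketch; it reads FPS as flows per second and interprets the 1.5% - 3% row as flow-export overhead relative to the traffic the exporter observes. Both helper names are hypothetical.

```python
import math
from typing import Tuple

FPS_PER_ENGINE = 50_000  # flows per second per polling engine
MAX_FPS = 300_000        # documented upper bound when scaling out


def nta_engines_for(fps: int) -> int:
    """Polling engines needed to receive `fps` flows per second."""
    if fps > MAX_FPS:
        raise ValueError("above the documented 300k FPS")
    return max(1, math.ceil(fps / FPS_PER_ENGINE))


def flow_export_mbps(observed_traffic_mbps: float) -> Tuple[float, float]:
    """Rough (low, high) flow-export bandwidth: 1.5% to 3% of observed traffic."""
    return observed_traffic_mbps * 0.015, observed_traffic_mbps * 0.03
```

For example, an exporter that sees 1,000 Mbps of traffic would send roughly 15 to 30 Mbps of flow data, and 120,000 FPS would call for three polling engines.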

Network Automation Manager (NAM)

| NAM Scalability Engine Guidelines | |
|---|---|
| Main polling engine limits | 48,000 elements at standard polling frequencies: 50–100 concurrent SolarWinds Platform Web Console users. To monitor more than ~1,000,000 elements, consider using SolarWinds Enterprise Operations Console. When you are not using or evaluating the Log Viewer, the following limits apply: When using the Log Viewer, the limit is 1,000 events per second (syslogs and SNMP traps combined). |
| Scalability options | Additional polling engines (APEs) are stacked automatically. You can poll up to 48,000 elements per server. NAM is licensed by nodes only, following the same model as SAM or SCM. For example, a NAM 4000 license covers 4,000 nodes. Interfaces and volumes do not consume a node license and therefore are not bound by the 4,000-node limit. Each instance supports up to 100 APEs, with a maximum of 1,000,000 monitored elements per instance. When you add one of the following modules to your NAM environment, you can either use NAM polling engines for these modules or deploy Additional polling engines for these modules: |
| Stackable Polling Engines | Polling engines are stacked automatically. If you are using a license key for each product in the bundle (NAM earlier than 2019.4), scalability limits for individual products apply. |
| SolarWinds Platform Collector | Yes |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the primary SolarWinds Platform server and any APEs that are connected over a WAN. Most traffic related to monitoring is between an APE and the SolarWinds Platform database. |
| Other considerations | How much bandwidth does SolarWinds require for monitoring? See SolarWinds Platform server Hardware Requirements in the SolarWinds Platform documentation. |

Network Configuration Manager (NCM)

| NCM Scalability Engine Guidelines | |
|---|---|
| Remote Office Poller | Yes |
| Main polling engine limits | ~10K devices |
| Scalability options | Each SolarWinds NCM instance supports a maximum of 100 APEs. Each APE can support ~10K devices. However, the number of devices in the entire environment (the primary engine + all APEs) cannot exceed ~30K. Examples: Integrated standalone mode |
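Although an NCM instance may have up to 100 APEs, the ~30K device ceiling means that, at ~10K devices per engine, NCM by itself fills only about three polling engines. A minimal Python sketch of that arithmetic; `ncm_engines_needed` is a hypothetical helper, and both limits are approximate.

```python
import math

DEVICES_PER_ENGINE = 10_000       # approximate, main polling engine or APE
MAX_DEVICES_PER_INSTANCE = 30_000  # approximate ceiling for the whole environment


def ncm_engines_needed(devices: int) -> int:
    """Polling engines (main + APEs) needed for `devices` NCM devices."""
    if devices > MAX_DEVICES_PER_INSTANCE:
        raise ValueError("more than ~30K devices in one NCM environment")
    return max(1, math.ceil(devices / DEVICES_PER_ENGINE))
```

For example, 25,000 devices need the main polling engine plus two APEs.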

Network Performance Monitor (NPM)

| NPM Scalability Engine Guidelines | |
|---|---|
| Stackable Polling Engines | Up to four polling engines in total can be installed on a single server, for example one main polling engine with one to three Additional polling engines, or four Additional polling engines on the same server. You can only stack APEs; stacking product licenses is not supported. By stacking four polling engines, you can poll up to 48,000 elements per server. A stack requires only one IP address, regardless of the number of APEs. |
| Remote Office Poller | ROP250 supports 250 elements; ROP1000 supports 1,000 elements |
| Main polling engine limits | ~12k elements at standard polling frequencies: 50–100 concurrent SolarWinds Platform Web Console users. To monitor more than ~1,000,000 elements, consider using SolarWinds Enterprise Operations Console. When you are not using or evaluating the Log Viewer, the following limits apply: When using the Log Viewer, the limit is 1,000 events per second (syslogs and SNMP traps combined). |
| Scalability options | One polling engine for every ~12,000 elements. See How is SolarWinds NPM licensed? Starting with Orion Platform 2020.2, a maximum of 100 APEs per instance, with up to 1,000,000 elements monitored per instance. |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the primary SolarWinds NPM server and any APEs that are connected over a WAN. Most traffic related to monitoring is between an APE and the SolarWinds Platform database. |
| NetPath™ scalability | The scalability of NetPath™ depends on the complexity of the paths you are monitoring and the interval at which you are monitoring them. In most network environments: |
| Other considerations | How much bandwidth does SolarWinds require for monitoring? See SolarWinds Platform server Hardware Requirements in the SolarWinds Platform documentation. |
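At the instance level, the NPM rule of one polling engine per ~12,000 elements hits the 1,000,000-element cap long before the 100-APE cap: 1,000,000 / 12,000 rounds up to 84 engines, that is, the main polling engine plus 83 APEs. A minimal Python sketch; `npm_engines_needed` is a hypothetical helper, and it ignores the extra headroom the main polling engine needs for alerting and reporting.

```python
import math

ELEMENTS_PER_ENGINE = 12_000          # "one polling engine for every ~12,000 elements"
MAX_ELEMENTS_PER_INSTANCE = 1_000_000  # Orion Platform 2020.2 and later


def npm_engines_needed(elements: int) -> int:
    """Polling engines (main + APEs) for one NPM instance at standard polling frequencies."""
    if elements > MAX_ELEMENTS_PER_INSTANCE:
        raise ValueError("above ~1,000,000 elements; consider SolarWinds EOC")
    return max(1, math.ceil(elements / ELEMENTS_PER_ENGINE))
```

Because the element cap binds first, the code does not need a separate check for the 100-APE limit.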

SolarWinds Platform Agents

| SolarWinds Platform Agents Scalability Engine Guidelines | |
|---|---|
| Scalability options | You can deploy up to 1,000 agents per polling engine. |

Patch Manager

| Patch Manager Scalability Engine Guidelines | |
|---|---|
| Scalability options | 1,000 nodes per automation server; 1,000 nodes per SQL Server Express instance (full SQL Server does not have this limitation). SQL Server Express is limited to 10 GB of storage. For large deployments, SolarWinds recommends using a remote SQL Server. |

Quality of Experience (QoE)

| QoE Scalability Engine Guidelines | |
|---|---|
| Scalability options | |

Security Event Manager (SEM)

| SEM Scalability Engine Guidelines | |
|---|---|
| Scalability options | Up to 864 million events per day (10,000 events per second); 5,000 rule hits per day |
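The two SEM event figures are the same limit expressed per day and per second: 10,000 events per second × 86,400 seconds per day = 864,000,000 events per day. A minimal Python sketch of the conversion; the helper names are hypothetical. Assuming the per-second rate is the binding figure, a short burst above 10,000 EPS can still exceed the limit even when the daily total stays under 864 million.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def events_per_day(events_per_second: int) -> int:
    """Daily event volume at a constant events-per-second rate."""
    return events_per_second * SECONDS_PER_DAY


def average_eps(daily_events: int) -> float:
    """Average events per second for a daily total spread evenly over the day."""
    return daily_events / SECONDS_PER_DAY
```

For example, `events_per_day(10_000)` returns 864,000,000, matching the documented daily limit.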

Server & Application Monitor (SAM)

| SAM Scalability Engine Guidelines | |
|---|---|
| Stackable polling engines | If you use the unified SAM license key (SAM 2020.2 and later) with node-based licensing, polling engines are stacked automatically. You can monitor up to 40,000 component monitors using WMI/WinRM, PowerShell, or SNMP-based monitoring (up to 60,000 components using agent-based monitoring) per server at standard polling frequencies. With component-based licensing, 2 polling engines can be installed on a single server. Stacking is supported. For details, see the SAM licensing model. |
| Remote Office Poller | Yes |
| Main polling engine limits | With node-based licensing, ~10K component monitors at standard polling frequencies. This leaves room for other main polling engine tasks, such as alerting and reporting. With component-based licensing, ~8-10K component monitors. 50–100 concurrent SolarWinds Platform Web Console users. |
| Scalability options | For SAM 2020.2 and later, with node-based licensing: For SAM 2020.2 and later, with component-based licensing: For SAM 2019.4.x and earlier: For tips on maximizing your polling capacity, see SAM polling recommendations. To extend beyond these component monitor capacities and surface additional monitored data in a single pane of glass, consider purchasing the SolarWinds Enterprise Operations Console. |
| SolarWinds Platform Collector | Supported in SAM 2020.2.1 or later, with node-based licensing only. |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the SolarWinds Platform server and any APEs connected over a WAN. Most traffic related to monitoring is between an APE and the SolarWinds Platform database server. Bandwidth requirements depend on the size of the relevant component monitor. Based on 67.5 KB per WMI poll and a 5-minute polling frequency, the estimate is 1.2 Mbps for 700 component monitors. |
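The SAM WMI bandwidth estimate can be reproduced from its inputs: 700 monitors × 67.5 KB × 8 bits, spread over a 300-second polling interval. With 1 KB = 1,000 bytes that works out to about 1.26 Mbps (about 1.29 Mbps with 1 KB = 1,024 bytes), so the documented 1.2 Mbps appears to be a rounded figure. A minimal Python sketch; `wmi_bandwidth_mbps` is a hypothetical helper, and it assumes every component monitor is polled once per interval with polls spread evenly across the interval.

```python
def wmi_bandwidth_mbps(component_monitors: int,
                       kb_per_poll: float = 67.5,
                       interval_s: float = 300.0,
                       bytes_per_kb: int = 1000) -> float:
    """Average WMI polling bandwidth in megabits per second.

    Assumes one poll per component monitor per interval, spread evenly.
    """
    bits_per_interval = component_monitors * kb_per_poll * bytes_per_kb * 8
    return bits_per_interval / interval_s / 1_000_000
```

Because bandwidth scales linearly with the number of monitors and inversely with the interval, halving the polling interval to 150 seconds doubles the estimate.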

Server Configuration Monitor (SCM)

| SCM Scalability Engine Guidelines | |
|---|---|
| Scalability options | A SolarWinds Platform instance with SCM installed can process up to 280 changes/second combined. |

Serv-U FTP Server and MFT Server

| Serv-U FTP Server and MFT Server Scalability Engine Guidelines | |
|---|---|
| Scalability options | 500 simultaneous FTP and HTTP transfers per Serv-U instance; 50 simultaneous SFTP and HTTPS transfers per Serv-U instance. For more information, see the Serv-U Distributed Architecture Guide. |

Storage Resource Monitor (SRM)

| SRM Scalability Engine Guidelines | |
|---|---|
| Stackable Polling Engines | No. Only one APE instance can be deployed on a single host. |
| Remote Office Poller | Yes<br>Poller remotability is a feature that enables local storage of up to ~1 GB of polled data per poller if the connection between the polling engine and the database is temporarily lost. |
| Main polling engine limits | Maximum of 40K LUNs per polling engine (primary or additional)<br>50–100 concurrent SolarWinds Platform Web Console users |
| Scalability options | Use APEs for horizontal scaling.<br>The upper limit that a single SRM instance can handle is 160K LUNs. For larger environments, contact SolarWinds for further assistance. |
| WAN and/or bandwidth considerations | Minimal monitoring traffic is sent between the primary SRM server and any APEs that are connected over a WAN. Most monitoring traffic flows between an APE and the SolarWinds database. |
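
The SRM limits translate into a simple engine count. The following is a minimal planning sketch, assuming the 40K LUNs per engine figure applies equally to the primary engine and to every APE; `srm_apes_needed` is a hypothetical helper. Because SRM polling engines are not stackable, each APE it reports needs its own host.

```python
import math

# Figures from the SRM table: 40K LUNs per polling engine (primary or
# additional) and an upper limit of 160K LUNs per SRM instance.
LUNS_PER_ENGINE = 40_000
MAX_LUNS_PER_INSTANCE = 160_000


def srm_apes_needed(lun_count: int) -> int:
    """Return how many APEs are needed in addition to the primary engine."""
    if lun_count < 0:
        raise ValueError("lun_count must be non-negative")
    if lun_count > MAX_LUNS_PER_INSTANCE:
        raise ValueError("beyond 160K LUNs, contact SolarWinds for guidance")
    engines = max(1, math.ceil(lun_count / LUNS_PER_ENGINE))
    return engines - 1  # the primary engine covers the first 40K LUNs


print(srm_apes_needed(95_000))  # 2
```

At the 160K LUN ceiling, this gives the primary engine plus three APEs.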

User Device Tracker (UDT)

| UDT Scalability Engine Guidelines | |
|---|---|
| Remote Office Poller | Yes |
| Main polling engine limits | 100k ports |
| Scalability options | 1 APE per 100k additional ports<br>Maximum of 500k ports per instance (1 primary polling engine and 4 APEs) |
| WAN and/or bandwidth considerations | None |
| Other considerations | UDT version 3.1 supports scheduling port discovery.<br>In UDT version 3.1, the maximum discovery size is 2,500 nodes/150,000 ports. |
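
Environments larger than the UDT 3.1 discovery limit can be split across several scheduled discoveries. The sketch below is a hypothetical helper (`plan_discovery_batches` is not part of UDT) that groups nodes greedily, in their original order, so that no batch exceeds 2,500 nodes or 150,000 ports. Greedy grouping is simple but is not an optimal packing, so it may produce one more batch than strictly necessary.

```python
from typing import List, Tuple

# Maximum discovery size quoted for UDT 3.1.
MAX_NODES = 2_500
MAX_PORTS = 150_000


def plan_discovery_batches(nodes: List[Tuple[str, int]]) -> List[List[str]]:
    """Group (node_name, port_count) pairs into discovery batches.

    Each batch stays within MAX_NODES nodes and MAX_PORTS ports.
    Nodes keep their input order; a new batch starts when either limit
    would be exceeded by adding the next node.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    current_ports = 0
    for name, ports in nodes:
        if ports > MAX_PORTS:
            raise ValueError(f"{name} alone exceeds the port limit")
        if len(current) == MAX_NODES or current_ports + ports > MAX_PORTS:
            batches.append(current)
            current, current_ports = [], 0
        current.append(name)
        current_ports += ports
    if current:
        batches.append(current)
    return batches
```

For example, 6,000 hypothetical 48-port switches hit the node limit first and split into batches of 2,500, 2,500, and 1,000 nodes.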

Virtualization Manager (VMAN)

| VMAN Scalability Engine Guidelines | |
|---|---|
| Scalability options | 1 APE per 10,000 monitored virtual machines<br>For the VMware events feature, VMAN supports a fixed maximum of 1,000 VMware events per second, regardless of deployment size and number of APEs.<br>Important: VMware events use the Log Viewer and count toward the Log Viewer/Log Analyzer 1,000 EPS limit. |
| Main polling engine system requirements | Upgrade the main polling engine to meet greater polling demands as the virtual environment grows. See the VMAN Deployment Sizing Guide. |
| Deployment Sizing Guide | For VMAN-specific sizing and scaling guidelines, see the VMAN Deployment Sizing Guide. |
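
The two VMAN figures can be checked with a short sketch. It reads "1 APE per 10,000 monitored virtual machines" literally, rounding up, and assumes that VMware events and other Log Viewer traffic draw on one shared 1,000 EPS budget, as the Important note states. Both function names are hypothetical; rely on the VMAN Deployment Sizing Guide for authoritative sizing.

```python
import math

# Figures from the VMAN table.
VMS_PER_APE = 10_000
EPS_LIMIT = 1_000  # shared by VMware events and Log Viewer/Log Analyzer


def vman_apes_for(vm_count: int) -> int:
    """Return the APE count implied by the 1-per-10,000-VMs guideline."""
    return math.ceil(vm_count / VMS_PER_APE)


def eps_headroom(vmware_eps: float, other_log_eps: float) -> float:
    """Return the remaining events/second; a negative value means the limit is exceeded."""
    return EPS_LIMIT - (vmware_eps + other_log_eps)


print(vman_apes_for(25_000))   # 3
print(eps_headroom(800, 400))  # -200
```

Adding APEs does not raise the EPS ceiling, so the event budget has to be checked separately from the VM count.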

VoIP & Network Quality Manager (VNQM)

| VNQM Scalability Engine Guidelines | |
|---|---|
| Remote Office Poller | Yes |
| Main polling engine limits | ~5,000 IP SLA operations<br>~200k calls/day with 20k calls/hour spike capacity |
| Scalability options | 1 APE per 5,000 IP SLA operations and 200,000 calls per day<br>Maximum of 15,000 IP SLA operations and 200,000 calls per day per SolarWinds VNQM instance (SolarWinds VNQM + 2 VNQM APEs) |
| WAN and/or bandwidth considerations | Between Call Manager and VNQM: 34 Kbps per call, based on estimates of ~256 bytes per CDR and CMR and based on 20k calls per hour |
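
A hypothetical helper, `check_vnqm_plan`, can compare a planned VNQM deployment against these limits. This sketch assumes the primary engine handles the first 5,000 IP SLA operations and each APE adds 5,000 more, and it treats the 200,000 calls per day and 20k calls/hour spike figures as hard ceilings for the instance.

```python
import math

# Figures from the VNQM table.
IP_SLA_PER_ENGINE = 5_000
MAX_IP_SLA_PER_INSTANCE = 15_000  # SolarWinds VNQM + 2 VNQM APEs
MAX_CALLS_PER_DAY = 200_000
SPIKE_CALLS_PER_HOUR = 20_000


def check_vnqm_plan(ip_sla_ops: int, calls_per_day: int, peak_calls_per_hour: int) -> dict:
    """Summarize a planned deployment against the published VNQM limits."""
    engines = max(1, math.ceil(ip_sla_ops / IP_SLA_PER_ENGINE))
    return {
        "apes_needed": engines - 1,  # the primary engine covers the first 5,000 operations
        "fits_one_instance": (
            ip_sla_ops <= MAX_IP_SLA_PER_INSTANCE
            and calls_per_day <= MAX_CALLS_PER_DAY
            and peak_calls_per_hour <= SPIKE_CALLS_PER_HOUR
        ),
    }


print(check_vnqm_plan(12_000, 150_000, 18_000))
```

With 12,000 IP SLA operations, the sketch reports two APEs and a plan that still fits one instance; at 16,000 operations it would need a third APE, which exceeds the documented maximum.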

Web Help Desk (WHD)

| WHD Scalability Engine Guidelines | |
|---|---|
| Deployments with fewer than 20 techs | You can run Web Help Desk on a system with:<br>This configuration supports 10–20 tech sessions with no onboard memory issues. To adjust the maximum memory setting, edit the |
| Deployments with more than 20 techs | If your deployment will support more than 20 tech sessions, SolarWinds recommends installing Web Help Desk on a system running:<br>To enable the 64-bit JVM, add the following argument to the<br>To increase the max heap memory on a 64-bit JVM, edit the<br>For other operating systems, install your own 64-bit JVM and then update the |

Web Performance Monitor (WPM)

| WPM Scalability Engine Guidelines | |
|---|---|
| | Not directly supported, but recordings may be made from multiple locations. |
| Main polling engine limits | 12 monitored transactions per WPM Player. See the next row for details. |
| Scalability options | SolarWinds recommends one transaction location per 12 monitored transactions. You can use the Player Load Percentage widget to estimate the number of transactions assigned to a machine that hosts a WPM Player. Many factors are involved, including:<br>If you notice a high load percentage, consider increasing the time intervals between polls, adding more players to a given location to distribute the load more evenly, or both. See Player Load Percentage in THWACK to learn more. |
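
As a starting point, the 12-transactions-per-Player guideline yields a Player count for each location. The sketch below assumes transactions are spread evenly across the Players at a location. Actual load depends on many factors, so confirm the result with the Player Load Percentage widget. `players_needed` and the location names are hypothetical.

```python
import math
from typing import Dict

TRANSACTIONS_PER_PLAYER = 12  # guideline from the WPM table


def players_needed(transactions_per_location: Dict[str, int]) -> Dict[str, int]:
    """Map each location name to the number of WPM Players suggested for it."""
    return {
        location: math.ceil(count / TRANSACTIONS_PER_PLAYER)
        for location, count in transactions_per_location.items()
    }


print(players_needed({"London": 30, "Austin": 12, "Branch": 0}))
# {'London': 3, 'Austin': 1, 'Branch': 0}
```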

Frequently Asked Questions

Does each module have its own polling engine?

A standard, licensed APE may have all relevant modules installed on it, and it performs polling for all installed modules. An APE works the same way as your main polling engine on your main server. For example, if you have NPM and SAM with component-based licensing installed, install one APE and it performs polling for both NPM and SAM.

For products that do not require an extra license for an APE, including LA, SAM (node-based licensing only), SCM, SRM, and VMAN, the APE polls data only for the product it is included with, along with SolarWinds Platform data. For example, a SAM APE returns SAM data and basic node metrics provided by the SolarWinds Platform, such as status and volume, but not NPM-specific data such as interfaces.

Are polling limits cumulative or independent? For example, can a single polling engine poll 12k NPM elements AND 10k SAM monitors together?

Yes. The limits are independent: a single polling engine can poll up to the limit of each installed module, provided sufficient hardware resources are available.

Are different license sizes available for the Additional polling engine?

No, the APE is available with an unlimited license.

Can you add an Additional polling engine to any size module license?

Yes, you can add an APE to any size license.

Adding an APE does not increase your license size. For example, if you are licensed for an NPM SL100, adding an APE does not increase the licensed limit of 100 nodes/interfaces/volumes, but the polling load is spread across two polling engines.

What happens if the connection from a polling engine to the SolarWinds Platform database server is lost?

If there is a connection outage to the SolarWinds Platform database server, polling engines cache the polled data on the APE servers.

The amount of data that can be cached depends on the disk space available on the polling engine server. The default storage space is 2 GB per polling queue.

When the connection to the database is restored, the SolarWinds Platform database server is updated with the locally cached data, and the oldest data is processed first.

If the database connection is broken for longer than an hour, the collector queue becomes full and the oldest data is discarded until a connection to the database is re-established.
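
The caching behavior described in this answer (oldest data replayed first, oldest data discarded once the queue is full) matches a bounded first-in, first-out queue. The toy model below illustrates that behavior with Python's `collections.deque`. It is not how SolarWinds implements the collector queue, and it bounds the queue by record count rather than by disk space. `PollingCache` is a hypothetical name.

```python
from collections import deque
from typing import Any, Callable


class PollingCache:
    """Toy model of a polling engine's local cache during a database outage.

    Capacity is counted in records here; the real queue is bounded by disk
    space (2 GB per polling queue by default).
    """

    def __init__(self, max_records: int) -> None:
        # With maxlen set, appending to a full deque drops the oldest item.
        self._queue: deque = deque(maxlen=max_records)

    def cache(self, record: Any) -> None:
        """Store a polled record while the database is unreachable."""
        self._queue.append(record)

    def flush(self, write: Callable[[Any], None]) -> None:
        """Replay cached records oldest-first once the database is reachable."""
        while self._queue:
            write(self._queue.popleft())


cache = PollingCache(max_records=3)
for poll in range(5):  # five polls arrive, but only three fit
    cache.cache(poll)
written = []
cache.flush(written.append)
print(written)  # [2, 3, 4]
```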